The idea that children go “online” is already outdated.

For most young people today, there is no meaningful distinction between online and offline life. Their friendships unfold across group chats, their sense of identity is shaped in digital spaces, and their understanding of the world is filtered through algorithms that respond faster than any adult can track.

This shift has happened quietly, but its implications are profound.

Much of the conversation around children and technology still revolves around screen time — how many hours, how often, and how late. It is a reassuringly simple metric. It can also be the wrong one.

What matters is not only how long children are on their devices, but also what they encounter there, how they interpret it, and whether they have the knowledge to respond safely.

That is a more complex problem — and one that cannot be solved through restrictions alone.

Children today are navigating an environment defined by speed, scale and sophistication. Artificial Intelligence (AI) can generate convincing images, voices and narratives. Content is personalised and persistent. Misinformation sits alongside fact, often indistinguishable without context. The result is a world in which children are constantly interpreting signals, many of which they are not developmentally equipped to fully understand.

In this environment, risk rarely appears as a single, obvious event. It accumulates. It begins with something ambiguous — a message that feels slightly off, a video that is difficult to process, a conversation that shifts in tone. These are the moments children often keep to themselves. Not because they lack trust, but because they fear losing access, independence or control.

This is where much of the current approach to digital safety can fall short.

A model built primarily on monitoring and restriction assumes that risk can be contained through oversight. But children’s digital lives are too fluid, too private and too embedded in their social world for that to be consistently effective. When control becomes the dominant strategy, children become more adept at concealing their experiences rather than sharing them.

What protects children is something less visible, and far more difficult to measure: their willingness to speak.

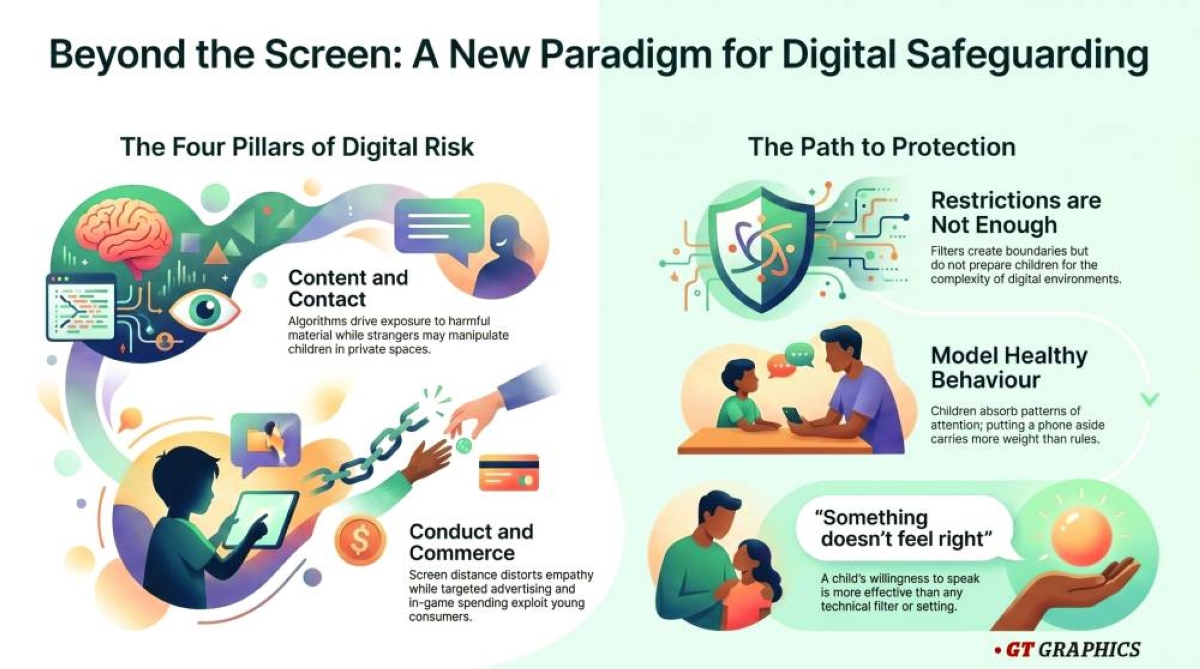

Understanding the risks children face can help clarify what that protection needs to look like. These risks tend to fall into four broad, overlapping areas.

Content – what children encounter. This includes explicit material, misinformation, and content that normalises harmful behaviours or unrealistic standards. Exposure is often unintentional, driven by algorithms rather than choice. The challenge lies in helping children question what they see and recognise when something feels wrong.

Contact – who children interact with. Digital platforms allow strangers to present themselves as peers and to move conversations quickly into private spaces. Some interactions remain harmless. Others involve manipulation, coercion or exploitation. Children need the confidence to disengage and the clarity to recognise when an interaction is unsafe.

Conduct – how children behave online. The distance created by screens can distort judgement. Messages escalate quickly. Images are shared without fully understanding their permanence or reach. These moments reflect a need for guidance around empathy, responsibility and consequence.

Commerce – how children engage as consumers. Online environments are designed to encourage spending and sharing. In-game purchases, targeted advertising, phishing and scams are part of that ecosystem. Children may not recognise when they are being influenced or exploited.

These risks do not operate in isolation. They intersect and reinforce one another, often in ways that are difficult to detect from the outside.

The response requires a shift in emphasis.

Practical tools – parental controls, privacy settings, screen-time limits – remain useful, particularly for younger children. They create structure. They signal boundaries. But they do not, on their own, prepare children for the complexity of the environments they inhabit.

Preparation comes from something else: conversation, modelling and trust.

Children watch how adults use technology. They absorb patterns of attention, behaviour and response. A phone placed aside during a conversation carries more weight than a rule about screen use. A calm discussion about something unsettling online creates space for future disclosure.

Digital literacy has also taken on a new urgency. Children need to understand how content is created, why it appears to them, and how tools such as AI can both inform and mislead. This does not require technical expertise from parents. It requires openness – a willingness to explore, question and learn alongside their children.

Safeguarding, in this context, becomes less about controlling exposure and more about building knowledge and capability.

Children will encounter risk. That is inevitable in a connected world. What matters is whether they can recognise it, respond to it and, critically, speak about it.

The most effective safeguard is not a filter or a setting.

It is a relationship in which a child knows they can say, “Something doesn’t feel right,” and be heard.

In a world that is always on, that may be the only protection that consistently holds.

• Claire Scowen is the Vice-President of Risk and the Global Lead of Safeguarding and Child Protection at GEMS Education.